Amazon OpenSearch Service recently introduced Multi-AZ with Standby, a new deployment option for managed clusters that enables 99.99% availability and consistent performance for business-critical workloads. With Multi-AZ with Standby, clusters are resilient to infrastructure failures such as hardware or networking failures. This option provides improved reliability, with the added benefit of simplifying cluster configuration and management by enforcing best practices and reducing complexity.

In this post, we share how Multi-AZ with Standby works under the hood to achieve high resiliency and consistent performance that meet the four 9s.

Background

One of the principles of designing highly available systems is that they need to be ready for impairments before they happen. OpenSearch is a distributed system that runs on a cluster of instances with different roles. In OpenSearch Service, you can deploy data nodes to store your data and respond to indexing and search requests, and you can also deploy dedicated cluster manager nodes to manage and orchestrate the cluster. To provide high availability, one common approach in the cloud is to deploy infrastructure across multiple AWS Availability Zones. Even in the rare case that a full zone becomes unavailable, the remaining zones continue to serve traffic with replicas.

When you use OpenSearch Service, you create indexes to hold your data and specify partitioning and replication for those indexes. Each index is composed of a set of primary shards and zero to many replicas of those shards. When you additionally use the Multi-AZ feature, OpenSearch Service ensures that primary shards and replica shards are distributed so that they're in different Availability Zones.
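To make the placement rule concrete, here is a minimal sketch, assuming a simple round-robin assignment and three zones named `az-a`, `az-b`, and `az-c` (all hypothetical). The function `place_shard_copies` is illustrative only and is not OpenSearch's allocator, which also weighs node attributes, disk usage, and shard balance. It puts every copy of a shard in a different zone and rotates the starting zone so primaries are spread evenly.

```python
from __future__ import annotations


def place_shard_copies(num_primaries: int, num_replicas: int,
                       zones: list[str]) -> dict[int, list[str]]:
    """Hypothetical placement: each copy of a shard lands in a distinct zone.

    The first zone in each list holds the primary; the rest hold replicas.
    """
    copies = 1 + num_replicas
    if copies > len(zones):
        raise ValueError("more copies than zones would co-locate copies of a shard")
    placement: dict[int, list[str]] = {}
    for shard in range(num_primaries):
        # Rotate the starting zone so primaries do not pile up in one zone.
        start = shard % len(zones)
        placement[shard] = [zones[(start + i) % len(zones)] for i in range(copies)]
    return placement


if __name__ == "__main__":
    for shard, shard_zones in place_shard_copies(3, 2, ["az-a", "az-b", "az-c"]).items():
        print(shard, shard_zones)
```

Refusing to place more copies than zones is a deliberate choice in this sketch: co-locating two copies of a shard in one zone would let a single zonal failure take out both.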

Previously, when there was an impairment in an Availability Zone, the service would scale up in the other Availability Zones and redistribute shards to spread the load evenly. This approach was reactive at best. Moreover, shard redistribution during failure events increases resource utilization, leading to higher latencies and overloaded nodes, which further impacts availability and effectively defeats the purpose of fault-tolerant, multi-AZ clusters. A more effective, statically stable cluster configuration requires provisioning infrastructure to the point where it can continue operating correctly without launching any new capacity or redistributing any shards, even when an Availability Zone becomes impaired.
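The capacity cost of static stability can be worked out with simple arithmetic. The following sketch assumes load spreads evenly across the surviving zones and that capacity is measured in one abstract unit; both are simplifying assumptions, and `per_zone_capacity` and `total_provisioned` are hypothetical helpers. To ride out the loss of one zone without launching anything, each zone must already be able to carry peak load divided among the surviving zones.

```python
def per_zone_capacity(peak_load: float, zones: int, zones_lost: int = 1) -> float:
    """Capacity each zone must already have so the survivors absorb peak load."""
    surviving = zones - zones_lost
    if surviving < 1:
        raise ValueError("at least one zone must survive")
    return peak_load / surviving


def total_provisioned(peak_load: float, zones: int, zones_lost: int = 1) -> float:
    """Total capacity provisioned up front across all zones."""
    return zones * per_zone_capacity(peak_load, zones, zones_lost)


# Three zones, peak load of 300 units: each zone needs 150, for 450 in total,
# that is, 1.5x the peak, provisioned before any failure happens.
print(per_zone_capacity(300, 3), total_provisioned(300, 3))
```

The same 1.5x ratio matches the active/standby layout described later in this post: two active zones each carry half the load, and an identically sized standby zone is held in reserve.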

Designing for high availability

OpenSearch Service manages tens of thousands of OpenSearch clusters. We've gained insights into which cluster configurations, such as hardware (data or cluster manager instance types), storage (EBS volume types), and shard sizes, are more resilient to failures and can meet the demands of common customer workloads. Some of these configurations have been incorporated into Multi-AZ with Standby to simplify cluster configuration. However, this alone isn't enough. A key ingredient in achieving high availability is maintaining data redundancy.

When you configure a single replica (two copies) for your indexes, the cluster can tolerate the loss of one shard copy (primary or replica) and still recover by copying the remaining one. A two-replica (three copies) configuration can tolerate the failure of two copies. With a single replica, you can still suffer data loss. For example, you could lose data if there is a catastrophic failure in one Availability Zone for a prolonged duration and, at the same time, a node in a second zone fails. To ensure data redundancy at all times, the cluster enforces a minimum of two replicas (three copies) across all its indexes. The following diagram illustrates this architecture.
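The double-fault example can be checked mechanically. This minimal sketch models each shard copy as a (zone, node) pair, with hypothetical zone and node names, and asks whether any copy survives a given combination of failed zones and failed nodes. It reproduces the scenario from the text: a prolonged outage of one zone plus a node failure in a second zone.

```python
from typing import NamedTuple


class Copy(NamedTuple):
    zone: str
    node: str


def shard_survives(copies, failed_zones, failed_nodes) -> bool:
    """A shard's data survives if at least one copy is outside every failure."""
    return any(c.zone not in failed_zones and c.node not in failed_nodes
               for c in copies)


two_copies = [Copy("az-a", "node-1"), Copy("az-b", "node-2")]
three_copies = two_copies + [Copy("az-c", "node-3")]

# Zone az-a is down for a long time, and node-2 in az-b fails at the same time.
print(shard_survives(two_copies, {"az-a"}, {"node-2"}))    # False
print(shard_survives(three_copies, {"az-a"}, {"node-2"}))  # True
```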

The Multi-AZ with Standby feature deploys infrastructure in three Availability Zones, keeping two zones active and one zone as standby. The standby zone provides consistent performance even during zonal failures, because it guarantees the same capacity at all times and relies on a statically stable design with no capacity provisioning or data movement during a failure. During normal operations, the active zones serve coordinator traffic for read and write requests as well as shard query traffic, and only replication traffic goes to the standby zone. OpenSearch uses a synchronous replication protocol for write requests, which by design has zero replication lag, enabling the service to instantly promote the standby zone to active in the event of any failure in an active zone. This event is referred to as a zonal failover. The previously active zone is demoted to standby, and recovery operations to bring it back to a healthy state begin.
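The role changes during a zonal failover can be sketched as a small state model. This is a hypothetical in-memory illustration, not the service's orchestration code: `ZoneRoles`, `fail_over`, and `mark_recovered` are names invented for the example. It promotes a healthy standby zone, demotes the failed zone to standby, and marks it as recovering so it is not promoted again until it is healthy.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class ZoneRoles:
    """Hypothetical in-memory model of active/standby zone roles."""
    active: set[str]
    standby: set[str]
    recovering: set[str] = field(default_factory=set)

    def fail_over(self, failed_zone: str) -> str:
        """Promote a standby zone in place of a failed active zone; return it."""
        if failed_zone not in self.active:
            raise ValueError(f"{failed_zone} is not an active zone")
        healthy_standby = sorted(self.standby - self.recovering)
        if not healthy_standby:
            raise RuntimeError("no healthy standby zone to promote")
        promoted = healthy_standby[0]
        # No data copy is needed here: with synchronous replication the
        # standby copies already hold every acknowledged write.
        self.standby.remove(promoted)
        self.active.add(promoted)
        # Demote the failed zone to standby and start bringing it back to health.
        self.active.remove(failed_zone)
        self.standby.add(failed_zone)
        self.recovering.add(failed_zone)
        return promoted

    def mark_recovered(self, zone: str) -> None:
        """Allow a recovered standby zone to be promoted again."""
        self.recovering.discard(zone)
```

For example, starting from `ZoneRoles({"az-a", "az-b"}, {"az-c"})`, calling `fail_over("az-a")` promotes `az-c` and leaves `az-a` as a recovering standby.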

Why zonal failover is critical but hard to get right

Multiple nodes in an Availability Zone can fail for a wide variety of reasons, such as hardware failures, infrastructure failures like fiber cuts, power or thermal issues, or inter-zone or intra-zone networking problems. Read requests can be served by any of the active zones, whereas write requests must be synchronously replicated to all copies across multiple Availability Zones. OpenSearch Service orchestrates two modes of failover: read failovers and write failovers.

The primary goals of read failovers are high availability and consistent performance. This requires the system to constantly monitor for faults and shift traffic away from the unhealthy nodes in the impacted zone. The system handles failovers gracefully, allowing all in-flight requests to finish while shifting new incoming traffic to a healthy zone. However, multiple shard copies across both active zones can also become unavailable in cases of two node failures or one zone failure plus one node failure (often referred to as double faults), which poses a risk to availability. To solve this challenge, the system uses a fail-open mechanism that serves traffic from the third zone even while it may still be in standby mode, so that the system remains highly available. The following diagram illustrates this architecture.
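The fail-open read path can be sketched as a two-tier lookup: prefer a healthy copy in an active zone, and fall back to the standby zone only when no active copy is available. This is a minimal sketch under stated assumptions: `route_read` is a hypothetical helper, shard health is given as a simple zone-to-boolean map, and it picks the first healthy zone alphabetically, whereas a real router would load-balance across candidates.

```python
from __future__ import annotations


def route_read(copy_healthy: dict[str, bool], active_zones: set[str],
               standby_zones: set[str]) -> str | None:
    """Return the zone to read a shard from, or None if no copy is healthy.

    copy_healthy maps zone -> whether that zone's copy of the shard is healthy.
    """
    healthy = {zone for zone, ok in copy_healthy.items() if ok}
    # Try active zones first; fail open to the standby zone on a double fault.
    for candidates in (active_zones, standby_zones):
        choices = sorted(healthy & candidates)
        if choices:
            return choices[0]
    return None


active, standby = {"az-a", "az-b"}, {"az-c"}
print(route_read({"az-a": True, "az-b": True, "az-c": True}, active, standby))
print(route_read({"az-a": False, "az-b": False, "az-c": True}, active, standby))
```

The second call models a double fault: both active copies are down, so the read is served from the standby zone instead of failing.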

An impaired network device affecting inter-zone communication can cause write requests to slow down significantly, owing to the synchronous nature of replication. In such an event, the system orchestrates a write failover to isolate the impaired zone, cutting off all ingress and egress traffic. Although recovery with a write failover is immediate, it takes all nodes in the zone, along with their shards, offline. However, when the impacted zone is brought back after the network recovers, shard recovery should still be able to use the unchanged data on its local disk, avoiding a full segment copy. Because a write failover makes the shard copies in that zone unavailable, we exercise write failovers with extreme caution, neither too frequently nor during transient failures.

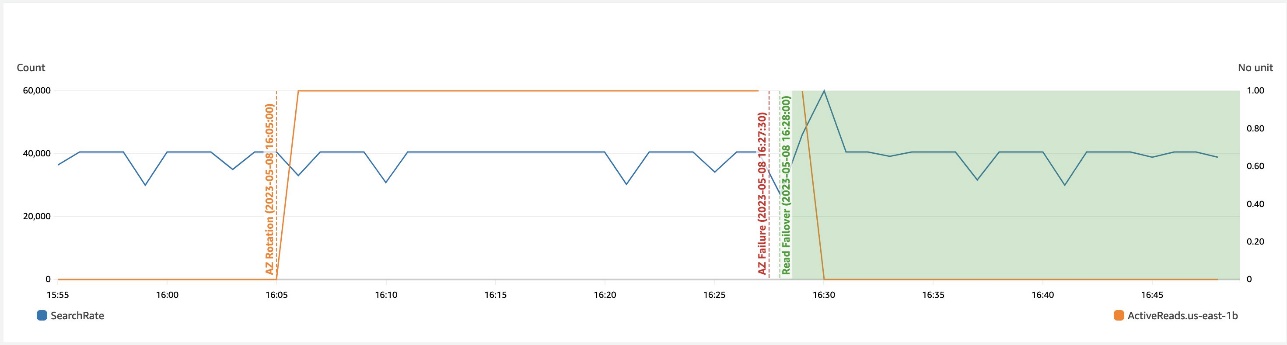

The following graph shows that during a zonal failure, automatic read failover prevents any impact on availability.

The following graph shows that during a networking slowdown in a zone, write failover helps recover availability.
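The caution around write failovers (not too frequently, not on transient failures) can be expressed as a trigger with two guards: the slowdown must persist for several consecutive intervals, and a cooldown must have elapsed since the last failover. This is a hypothetical sketch; the class name, the use of p99 write latency as the signal, and every threshold are assumptions for illustration, not values the service uses.

```python
from collections import deque


class WriteFailoverTrigger:
    """Fire only on a sustained slowdown, at most once per cooldown window."""

    def __init__(self, latency_threshold_ms: float, sustained_intervals: int,
                 cooldown_intervals: int):
        self.latency_threshold_ms = latency_threshold_ms
        self.window = deque(maxlen=sustained_intervals)
        self.cooldown_intervals = cooldown_intervals
        # Start as if the cooldown has already elapsed, so the first
        # sustained slowdown can trigger a failover.
        self.intervals_since_failover = cooldown_intervals

    def observe(self, p99_write_latency_ms: float) -> bool:
        """Record one interval's latency; return True if a failover should start."""
        self.intervals_since_failover += 1
        self.window.append(p99_write_latency_ms > self.latency_threshold_ms)
        sustained = len(self.window) == self.window.maxlen and all(self.window)
        if sustained and self.intervals_since_failover > self.cooldown_intervals:
            self.intervals_since_failover = 0
            self.window.clear()
            return True
        return False


trigger = WriteFailoverTrigger(latency_threshold_ms=500, sustained_intervals=3,
                               cooldown_intervals=10)
# A single good interval breaks the streak, so a transient blip never fires.
print([trigger.observe(ms) for ms in [900, 100, 900, 900]])
```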

To ensure that the zonal failover mechanism is predictable (able to shift traffic seamlessly during an actual failure event), we regularly exercise failovers and keep rotating the active and standby zones even during steady state. This not only verifies all network paths, so that we don't hit surprises like clock skew, stale credentials, or networking issues during a failover, but also gradually warms caches in every zone to avoid cold starts on failover, so that we deliver consistent performance at all times.
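A steady-state rotation can be as simple as a round-robin over which zone is standby, so every zone regularly takes its turn serving active traffic. The sketch below is hypothetical: `rotation_schedule` is an invented helper, and the post does not describe the actual rotation order or cadence.

```python
from itertools import cycle, islice


def rotation_schedule(zones, steps):
    """Yield (active_zones, standby_zone) for each planned rotation, round-robin."""
    for standby in islice(cycle(zones), steps):
        yield [z for z in zones if z != standby], standby


for active, standby in rotation_schedule(["az-a", "az-b", "az-c"], 4):
    print("active:", active, "standby:", standby)
```

Because every zone cycles through the active role, its network paths and caches are exercised before a real failover ever depends on them.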

Improving the resiliency of the service

OpenSearch Service uses several principles and best practices to increase reliability, such as automatic detection of and faster recovery from failures, throttling excess requests, fail-fast strategies, limiting queue sizes, adapting quickly to workload demands, keeping dependencies loosely coupled, continuously testing for failures, and more. We discuss a few of these methods in this section.
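Two of these principles, limiting queue sizes and failing fast, combine naturally: a bounded queue that rejects new work immediately when full, instead of letting a backlog grow without limit. The sketch is hypothetical (`BoundedRequestQueue` is an invented name), though it is similar in spirit to OpenSearch's bounded thread pool queues.

```python
import queue


class BoundedRequestQueue:
    """Accept work up to a fixed limit; reject immediately beyond it."""

    def __init__(self, max_size: int):
        self._q = queue.Queue(maxsize=max_size)

    def submit(self, request) -> bool:
        try:
            self._q.put_nowait(request)
            return True
        except queue.Full:
            # Fail fast: the caller learns right away and can back off or
            # retry elsewhere, instead of waiting behind an unbounded backlog.
            return False

    def take(self):
        """Remove and return the oldest queued request (blocks if empty)."""
        return self._q.get()
```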

Automatic failure detection and recovery

All faults are monitored at minute-level granularity, across multiple sub-minute metric data points. Once a fault is detected, the system automatically triggers a recovery action on the impacted node. Most classes of failures discussed so far in this post are binary failures, where the failure is definitive. There is another kind: non-binary failures, termed gray failures, whose manifestations are subtle and usually defy quick detection. Slow disk I/O is one example, which degrades performance. The monitoring system looks for anomalies in I/O wait times, latencies, and throughput to detect and replace a node with slow I/O. Fast, effective detection and quick recovery is our best bet against the wide variety of infrastructure failures beyond our control.
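One common way to catch a gray failure such as slow disk I/O is to compare each node against its peers rather than against a fixed threshold. The post does not say which detector the service uses, so the sketch below swaps in a standard technique: a modified z-score based on the median absolute deviation (MAD), with the conventional 3.5 cutoff. The function name and the input shape (I/O wait in milliseconds per node) are assumptions for illustration.

```python
from __future__ import annotations

import statistics


def slow_io_outliers(io_wait_ms: dict[str, float], threshold: float = 3.5) -> list[str]:
    """Return nodes whose I/O wait is anomalously high relative to their peers.

    Uses a modified z-score built on the median absolute deviation (MAD),
    which one slow node cannot distort the way it would distort a mean and
    standard deviation.
    """
    values = list(io_wait_ms.values())
    median = statistics.median(values)
    deviations = [abs(v - median) for v in values]
    mad = statistics.median(deviations)
    if mad > 0:
        scale = mad / 0.6745
    else:
        # Most nodes report the same value; fall back to the mean absolute
        # deviation so a lone outlier can still be scored.
        mean_ad = statistics.fmean(deviations)
        if mean_ad == 0:
            return []  # every node reports the same value
        scale = 1.253314 * mean_ad
    # Only the high side matters: unusually low I/O wait is not a fault.
    return sorted(node for node, v in io_wait_ms.items()
                  if (v - median) / scale > threshold)


print(slow_io_outliers({"n1": 5.0, "n2": 6.0, "n3": 5.5, "n4": 6.2, "n5": 48.0}))
```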

Effective workload management in a dynamic environment

We've studied workload patterns that either overload the system with too many requests, maxing out CPU and memory, or involve a few rogue queries that allocate huge chunks of memory or run away and exhaust multiple cores. Both patterns either degrade the latency of other critical requests or cause multiple nodes to fail as system resources run low. Some of the improvements in this direction are being made as part of the search backpressure initiatives, starting with tracking the resource footprint of requests at various checkpoints; when requests breach resource limits for a sustained duration, the system stops accepting more requests and cancels those already running. To supplement backpressure in traffic shaping, we use admission control, which rejects a request at the entry point when the system is already running high on CPU and memory, avoiding non-productive work (requests that would either time out or get cancelled anyway). Most of the workload management mechanisms have configurable knobs. No one size fits all workloads, so we use Auto-Tune to control them more granularly.
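The two mechanisms act at different points in a request's life: admission control decides at the entry point, while backpressure watches requests that are already running. The following sketch shows both under stated assumptions; `NodeStats`, `RunningTask`, `admit`, `tasks_to_cancel`, and all limits are hypothetical and do not correspond to OpenSearch's actual settings.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class NodeStats:
    cpu_percent: float
    heap_percent: float


def admit(stats: NodeStats, cpu_limit: float = 85.0, heap_limit: float = 85.0) -> bool:
    """Admission control: reject at the entry point when the node is already hot."""
    return stats.cpu_percent < cpu_limit and stats.heap_percent < heap_limit


@dataclass
class RunningTask:
    task_id: str
    heap_bytes: int
    breach_streak: int = 0  # consecutive checkpoints spent over the limit


def tasks_to_cancel(tasks: list[RunningTask], heap_limit_bytes: int,
                    sustained_checks: int = 3) -> list[str]:
    """Backpressure checkpoint: cancel tasks that stay over the limit too long."""
    cancel = []
    for t in tasks:
        # A single dip back under the limit resets the streak.
        t.breach_streak = t.breach_streak + 1 if t.heap_bytes > heap_limit_bytes else 0
        if t.breach_streak >= sustained_checks:
            cancel.append(t.task_id)
    return cancel
```

Requiring a sustained breach before cancelling trades a little extra resource use for fewer false positives on short-lived spikes.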

The cluster manager performs critical coordination tasks like metadata management and cluster formation, and orchestrates a few background operations like snapshots and shard placement. We added a task throttler to control the rate of dynamic mapping updates, snapshot tasks, and similar operations, so that they don't overwhelm the cluster manager and critical operations can run deterministically at all times. But what happens when there is no cluster manager in the cluster? The next section covers how we solved this.
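A per-task-type throttler can be sketched as a cap on how many tasks of each type may be pending at once, with new submissions rejected once the cap is reached. This is a hypothetical illustration: `TaskThrottler` and the task type names are invented for the example, and task types without a configured limit are left unthrottled.

```python
from __future__ import annotations

from collections import defaultdict


class TaskThrottler:
    """Reject new cluster manager tasks of a type once too many are pending."""

    def __init__(self, limits: dict[str, int]):
        self.limits = limits
        self.pending: defaultdict[str, int] = defaultdict(int)

    def try_submit(self, task_type: str) -> bool:
        limit = self.limits.get(task_type)
        if limit is not None and self.pending[task_type] >= limit:
            return False  # throttled: the caller should back off and retry
        self.pending[task_type] += 1
        return True

    def complete(self, task_type: str) -> None:
        if self.pending[task_type] > 0:
            self.pending[task_type] -= 1
```

Limiting by type, rather than with one global cap, keeps a burst of one kind of task (for example, dynamic mapping updates) from starving unrelated critical operations.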

Decoupling critical dependencies

In the event of a cluster manager failure, searches continue as usual, but all write requests start to fail. We concluded that allowing writes in this state should still be safe as long as they don't need to update the cluster metadata. This change further improves write availability without compromising data consistency. We also evaluated other service dependencies to ensure that downstream dependencies can scale as the cluster grows.
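The safety condition above can be sketched as a simple predicate: a write is allowed without a cluster manager only if it touches an existing index and introduces no new fields, because creating an index or adding a field through dynamic mapping would change cluster metadata. This is a deliberately simplified, hypothetical check; `can_index_without_cluster_manager` is an invented name, and real writes can require other metadata updates that it does not model.

```python
def can_index_without_cluster_manager(doc_fields: set, mapped_fields: set,
                                      index_exists: bool) -> bool:
    """Allow a write only if it needs no cluster metadata update."""
    # Creating an index, or adding a field through dynamic mapping, would
    # change cluster metadata, which requires a cluster manager.
    return index_exists and doc_fields <= mapped_fields


mapped = {"timestamp", "message", "level"}
print(can_index_without_cluster_manager({"timestamp", "message"}, mapped, True))
print(can_index_without_cluster_manager({"timestamp", "new_field"}, mapped, True))
```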

Failure mode testing

Although it's hard to mimic every kind of failure, we rely on AWS Fault Injection Simulator (AWS FIS) to inject common faults into the system, such as node failures, disk impairment, or network impairment. Testing with AWS FIS regularly in our pipelines helps us improve our detection, monitoring, and recovery times.

Contributing to open source

OpenSearch is open-source, community-driven software. Most of the changes, including the high availability design that supports active and standby zones, have been contributed to open source; in fact, we follow an open-source-first development model. The fundamental primitive that enables zonal traffic shifts and failovers is a weighted traffic routing policy (active zones are assigned a weight of 1 and standby zones a weight of 0). Write failovers use the zonal decommission action, which evacuates all traffic from a given zone. Resiliency improvements for search backpressure and cluster manager task throttling are among the ongoing efforts. If you're excited to contribute to OpenSearch, open a GitHub issue and let us know your thoughts.
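The weighted routing primitive can be illustrated in a few lines: traffic goes to a zone with probability proportional to its weight, so a zone with weight 0 receives none, and a failover is just a change of weights. This is a minimal sketch of the idea only; `pick_zone` and `fail_over_weights` are hypothetical helpers, not OpenSearch's routing code or its REST API.

```python
from __future__ import annotations

import random


def pick_zone(weights: dict[str, float], rng: random.Random) -> str:
    """Choose a zone with probability proportional to its routing weight."""
    zones = [z for z, w in weights.items() if w > 0]
    if not zones:
        raise ValueError("no zone has a positive weight")
    return rng.choices(zones, weights=[weights[z] for z in zones], k=1)[0]


def fail_over_weights(weights: dict[str, float], failed: str,
                      promoted: str) -> dict[str, float]:
    """Shift traffic by swapping weights: the failed zone drops to 0."""
    new = dict(weights)
    new[failed], new[promoted] = 0, 1
    return new


rng = random.Random(7)
weights = {"az-a": 1, "az-b": 1, "az-c": 0}  # az-c is the standby zone
print(sorted({pick_zone(weights, rng) for _ in range(1000)}))
print(fail_over_weights(weights, failed="az-a", promoted="az-c"))
```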

Summary

Improving reliability is a never-ending cycle as we continue to learn and improve. With the Multi-AZ with Standby feature, OpenSearch Service has integrated best practices for cluster configuration, improved workload management, and achieved four 9s of availability with consistent performance. OpenSearch Service has also raised the bar by continuously verifying availability with zonal traffic rotations and automated tests using AWS FIS.

We're excited to continue our efforts to improve reliability and fault tolerance even further, and to see what new and existing solutions builders can create using OpenSearch Service. We hope this leads to a deeper understanding of the right level of availability for the needs of your business and how this offering achieves its availability SLA. We would love to hear from you, especially about your success stories achieving high levels of availability on AWS. If you have other questions, please leave a comment.

About the authors

Bukhtawar Khan is a Principal Engineer working on Amazon OpenSearch Service. He is interested in building distributed and autonomous systems. He is a maintainer and an active contributor to OpenSearch.

Gaurav Bafna is a Senior Software Engineer working on OpenSearch at Amazon Web Services. He is fascinated by solving problems in distributed systems. He is a maintainer and an active contributor to OpenSearch.

Murali Krishna is a Senior Principal Engineer at AWS OpenSearch Service. He has built AWS OpenSearch Service and AWS CloudSearch. His areas of expertise include information retrieval, large-scale distributed computing, and low-latency real-time serving systems. He has vast experience in designing and building web-scale systems for crawling, processing, indexing, and serving text and multimedia content. Prior to Amazon, he was part of Yahoo!, building crawling and indexing systems for its search products.

Ranjith Ramachandra is a Senior Engineering Manager working on Amazon OpenSearch Service. He is passionate about highly scalable distributed systems and high-performance, resilient systems.

Rohin Bhargava is a Sr. Product Manager with the Amazon OpenSearch Service team. His passion at AWS is to help customers find the right mix of AWS services to achieve success for their business goals.